In healthcare, human-in-the-loop (HITL) is a widely used term, and a poorly understood one. It often gets reduced to “a physician validates it.” In practice, HITL is something else: it is the operational design that defines when and how a person intervenes, with clear responsibilities, traceable evidence, and mechanisms to prevent common risks such as overconfidence in automation.

If you are evaluating AI for a hospital, a primary health care (PHC) network, or a screening program (for example, diabetic retinopathy), this article gives you a simple framework to separate marketing from real implementation.

What human-in-the-loop is (and what it is NOT)

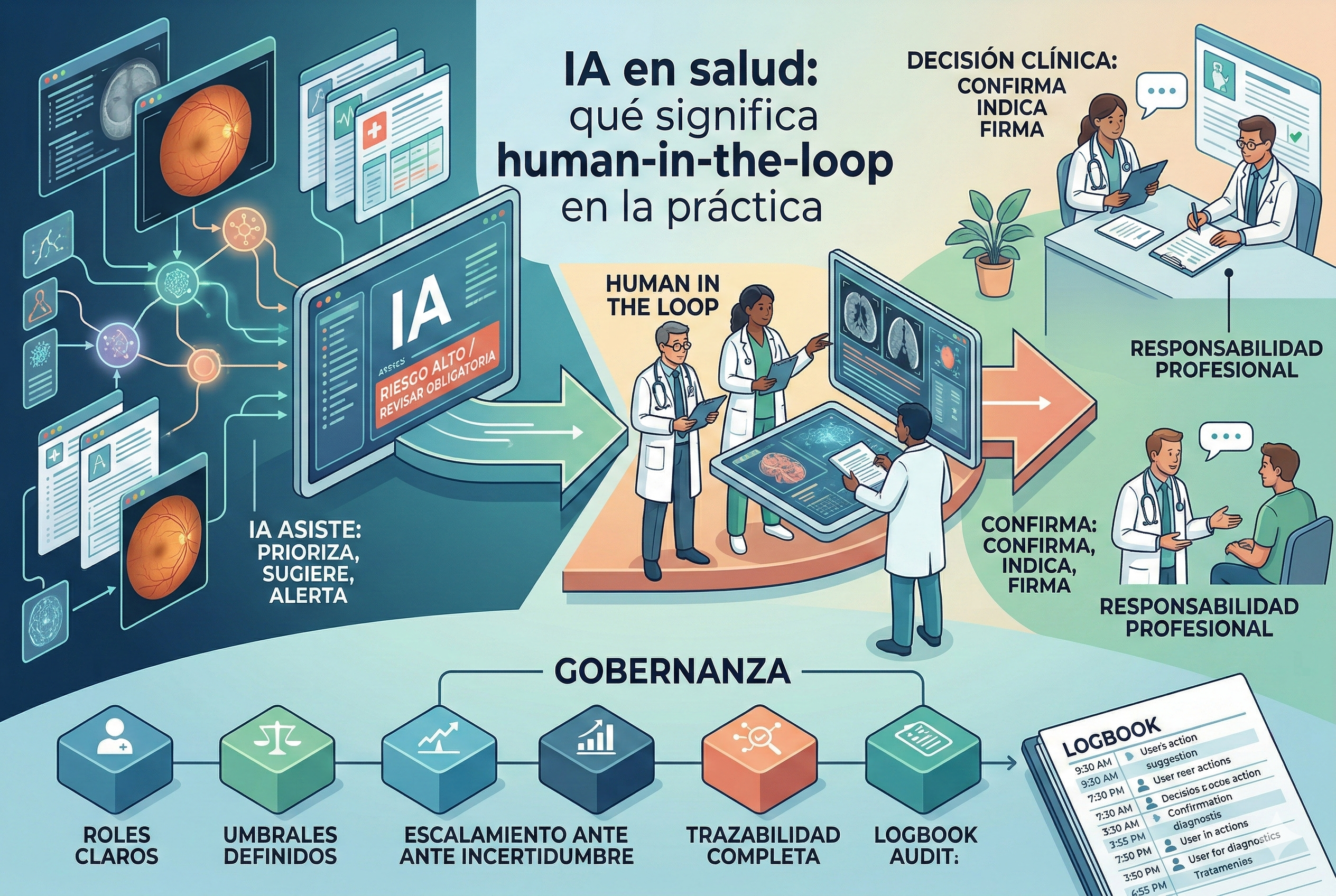

HITL means the system is designed so critical decisions are not “closed” by AI alone, but go through a defined human circuit: capture, verification, prioritization, review, reporting, and follow-up.

It is not HITL when:

- A human only “signs off” without reviewing evidence.
- There are no escalation criteria (everything goes through or nothing goes through).
- There is no record of who made the decision, with what information, and under which model version.
- AI is integrated in a way that pushes default acceptance (risk of automation bias).

The literature describes automation bias as the tendency to over-trust automated recommendations, even when they are wrong, especially under workload or time pressure (systematic review available on PubMed Central).

The 3 loops that matter in clinical AI

In real implementation, HITL is not a single step: it is three complementary loops.

1) Operations loop (day-to-day)

Defines what happens with each case:

- Who captures the study (technician, nursing staff, etc.).
- What AI validates (for example, image quality or triage).
- Who decides the next step (refer, report, repeat capture, etc.).
- What turnaround times are expected (internal SLAs).

In retina programs, this loop is critical because the bottleneck is usually ophthalmology: properly designed HITL protects specialist time without degrading clinical safety.

2) Safety and risk loop (before and after go-live)

Includes:

- risk analysis (what can fail and how it is controlled),
- planned testing,
- clinical validation,
- contingency plans.

Regulatory bodies and frameworks insist on thinking about AI across the full lifecycle (not only the pilot). As practical references, you can review:

- Good Machine Learning Practice (GMLP) guiding principles for AI/ML-based medical devices (FDA): https://www.fda.gov/medical-devices/software-medical-device-samd/good-machine-learning-practice-medical-device-development-guiding-principles
- GMLP principles document (PDF): https://www.fda.gov/media/153486/download
- Ethics and governance guidance for AI in health (WHO): https://www.who.int/publications/i/item/9789240029200
- Clinical evaluation of SaMD (IMDRF): https://www.imdrf.org/sites/default/files/docs/imdrf/final/technical/imdrf-tech-170921-samd-n41-clinical-evaluation_1.pdf

3) Continuous improvement loop (production monitoring)

AI performance shifts for many reasons: population changes, new cameras, different protocols, and more. Mature HITL requires:

- performance and process metrics,
- error review (including false negatives),
- drift monitoring,
- and change governance (what is updated, when, and how it is communicated).
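As an illustration of drift monitoring, here is a minimal Python sketch that compares the human vs AI disagreement rate of a recent window against a baseline window. The names (`WindowStats`, `drift_alert`) and the 0.05 tolerance are hypothetical choices for this example, not clinical recommendations; a real program would pick its own metrics and thresholds during validation.

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    """Counts for one monitoring window (for example, one week of cases)."""
    reviewed: int       # cases that received a human read
    disagreements: int  # cases where the human read differed from the AI output


def disagreement_rate(stats: WindowStats) -> float:
    """Fraction of human-reviewed cases where human and AI disagreed."""
    if stats.reviewed == 0:
        return 0.0  # nothing reviewed yet, so there is no signal
    return stats.disagreements / stats.reviewed


def drift_alert(baseline: WindowStats, current: WindowStats,
                tolerance: float = 0.05) -> bool:
    """True when the current rate exceeds the baseline rate by more than
    `tolerance` (absolute difference). The tolerance is an assumed value."""
    return disagreement_rate(current) - disagreement_rate(baseline) > tolerance


baseline = WindowStats(reviewed=1000, disagreements=80)  # 8% disagreement
this_week = WindowStats(reviewed=200, disagreements=30)  # 15% disagreement
needs_review = drift_alert(baseline, this_week)
```

An alert like this does not say why performance moved. It only tells the team that the error review and change governance steps in the list above should be triggered.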

For AI risk management beyond healthcare, the NIST AI Risk Management Framework is a useful cross-domain framework: https://www.nist.gov/itl/ai-risk-management-framework

HITL design patterns that work (with concrete examples)

Pattern A: “AI filters, human confirms”

Useful when the cost of error is high.

- AI prioritizes or suggests.
- A professional confirms relevant cases.
- Minimum reasoning is documented (not just “approved” without evidence).

Retina example: AI identifies high-risk cases for ophthalmology review, while cases without clear findings are handled with defined clinical rules (always with an escalation policy when uncertainty exists).
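The prioritization step in Pattern A can be sketched as a sorted worklist. This is a minimal example under stated assumptions: `QueuedCase`, its fields, and the rule "highest score first, oldest first on ties" are hypothetical and would be replaced by whatever your clinical rules define.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class QueuedCase:
    case_id: str
    ai_score: float   # hypothetical AI risk score in [0, 1]
    received_at: str  # ISO 8601 timestamp, so string order equals time order


def build_worklist(cases: List[QueuedCase]) -> List[QueuedCase]:
    """Order cases for specialist review: highest AI score first,
    and among equal scores, the case that has waited longest first."""
    return sorted(cases, key=lambda c: (-c.ai_score, c.received_at))


pending = [
    QueuedCase("a", 0.2, "2024-01-01T08:00"),
    QueuedCase("b", 0.9, "2024-01-01T09:00"),
    QueuedCase("c", 0.9, "2024-01-01T08:30"),
]
worklist_ids = [c.case_id for c in build_worklist(pending)]
```

Note that the AI only orders the queue here. The confirmation and the documented reasoning still belong to the professional.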

Pattern B: “AI-assisted capture + quality control”

Many failures are not diagnostic; they are data quality issues.

- AI guides operators toward better capture.
- If image quality is insufficient, capture is repeated immediately.
- The rate of “ungradable studies” decreases.

This pattern relies on usability and safe-use processes: the FDA has a classic guidance on human factors for medical devices (highly applicable to clinical software): https://www.fda.gov/regulatory-information/search-fda-guidance-documents/applying-human-factors-and-usability-engineering-medical-devices
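The capture rule in Pattern B can be written as a small decision function. The sketch below assumes a hypothetical image quality score between 0 and 1 and illustrative limits (`min_quality=0.7`, `max_attempts=3`); none of these values come from a specific product.

```python
def capture_next_step(quality_score: float, attempts: int,
                      min_quality: float = 0.7, max_attempts: int = 3) -> str:
    """Decide what the operator does right after a capture.

    `attempts` counts the captures already taken, including this one.
    Thresholds are assumed values for illustration only.
    """
    if quality_score >= min_quality:
        return "accept"               # image is gradable, continue the flow
    if attempts < max_attempts:
        return "recapture"            # repeat while the patient is still present
    return "escalate_ungradable"      # stop retrying and hand over to a human
```

For example, `capture_next_step(0.4, attempts=1)` asks for a recapture, while the same score on the third attempt escalates the study instead of looping forever.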

Pattern C: “Dual escalation: uncertainty + context”

Not everything should be decided by a score.

- If AI is uncertain -> escalate.
- If contextual factors exist (symptoms, comorbidities, history) -> escalate, even with a low score.
- If there is human vs AI disagreement -> second read or audit.
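The three rules of Pattern C translate almost directly into code. This is a minimal sketch, assuming a hypothetical score in [0, 1], an "uncertain" band of 0.3 to 0.7, and a decision threshold of 0.5. The `Case` type and all numeric values are illustrative assumptions, not validated settings.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Case:
    ai_score: float                        # hypothetical AI risk score in [0, 1]
    has_context_flags: bool                # symptoms, comorbidities, or history
    human_positive: Optional[bool] = None  # first human read, if one exists


def escalation_reasons(case: Case, low: float = 0.3, high: float = 0.7,
                       decision_threshold: float = 0.5) -> List[str]:
    """Return every reason this case needs escalation (empty list = none)."""
    reasons = []
    if low <= case.ai_score <= high:
        reasons.append("ai_uncertain")
    if case.has_context_flags:
        # Context escalates even when the score is low.
        reasons.append("clinical_context")
    if case.human_positive is not None:
        ai_positive = case.ai_score >= decision_threshold
        if case.human_positive != ai_positive:
            reasons.append("human_ai_disagreement")  # second read or audit
    return reasons
```

Returning a list of reasons, rather than a single yes/no, keeps the escalation traceable: the record shows why a case was sent to a specialist, not only that it was.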

Operational checklist: what to ask in a demo to know if HITL is real

Use these questions during procurement/evaluation:

1) Roles and permissions: who can capture, review, report, and audit?

2) Thresholds and escalation: what triggers human review (uncertainty, quality, finding)? Is it configurable?

3) Evidence and traceability: is there a record of who decided, when, with which model version, and on which data?

4) Error handling: how is a mis-prioritized case reported? Is there an improvement loop?

5) Workflow integration: does it reduce work or increase it? (watch for excessive alerts and fatigue)

6) Clinical validation: what public evidence is available? Is it based on populations comparable to LATAM?

7) Production monitoring: is there a metrics dashboard? How is drift detected?
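To make question 3 (evidence and traceability) concrete, this is roughly what a minimal decision record could look like. It is a sketch using only the Python standard library; the field names, the `make_record` helper, and the example identifiers are hypothetical, and a real system would add access control and tamper-resistant storage.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)  # frozen: a record cannot be modified after creation
class DecisionRecord:
    case_id: str
    reviewer_id: str     # who decided
    decision: str        # what was decided (for example, "refer")
    model_version: str   # which model version produced the AI output
    input_sha256: str    # fingerprint of the data the decision was based on
    decided_at: str      # when, as a UTC ISO 8601 timestamp


def make_record(case_id: str, reviewer_id: str, decision: str,
                model_version: str, image_bytes: bytes) -> DecisionRecord:
    """Build a record, hashing the input so it can be verified later."""
    return DecisionRecord(
        case_id=case_id,
        reviewer_id=reviewer_id,
        decision=decision,
        model_version=model_version,
        input_sha256=hashlib.sha256(image_bytes).hexdigest(),
        decided_at=datetime.now(timezone.utc).isoformat(),
    )


def to_log_line(record: DecisionRecord) -> str:
    """Serialize the record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = make_record("case-001", "reviewer-42", "refer",
                     "model-1.2.0", b"fake image bytes")
log_line = to_log_line(record)
```

If a vendor cannot show you something equivalent to these six fields for any past case, the answer to question 3 is effectively "no."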

How HITL is applied in Retinar (teleophthalmology for retina)

Retinar was designed from the start with a field-operable HITL approach, intended for Argentina and Latin America, where demand is high, specialist resources are limited, and equipment is heterogeneous.

In practical terms:

- Decentralized capture + assistance: studies can be captured in primary care or outreach campaigns, with a flow that minimizes recaptures and ensures quality.

- AI prediagnosis for prioritization: AI helps identify high-risk cases and accelerates referral, while the workflow is designed so specialists focus their time where they add value.

- Professional review and traceability: cases that require confirmation move to remote reporting, with full flow logging (who reviewed and what was decided).

- Multi-camera compatibility: in real programs, HITL also means adapting to the installed equipment base without breaking the process.

If your goal is to implement diabetic retinopathy and/or glaucoma-risk screening without saturating ophthalmology, this approach enables clinical safety and operational predictability, avoiding the “eternal pilot.”

Closing: HITL is not a feature, it is a work system

When HITL is well implemented:

- operational friction goes down,
- clinical trust goes up,
- data quality improves,
- and a program becomes scalable (especially for screening).

When HITL is poorly implemented:

- overconfidence risk increases,
- new bottlenecks are created,
- or “automatic approvals” happen without evidence.

Let’s bring this into a real pilot

Do you want to see a concrete example of HITL applied to teleophthalmology in the field (primary care, campaigns, or private networks), with clear operational metrics?

Contact us and we can schedule a Retinar demo to evaluate your workflow, your equipment, and a phased implementation plan:

- Contact form on the website
- Or message us to set up a pilot in your institution